**Update** After I wrote this article and sent it to Twitter, the company suspended up to 1,800 accounts and is investigating more.

The recent de-nationalisation of Isa Qasim, Bahrain’s most prominent Shia cleric, sparked a massive public reaction in Bahrain and across the globe. The move, which made Isa Qasim stateless (ironically on World Refugee Day), is a common one in Bahrain. The government frequently remove the citizenship of those they deem to be dissidents.

As is the case with any public outcry, it didn’t take long before it reached Twitter. However, Twitter activity related to the Isa Qasim incident indicates that there are automated Twitter accounts attempting to justify the decision against Qasim. This, in turn, implies that a company, country, individual, or individuals, are using software to distort the informationscape by generating sectarian, anti-Shia, and anti-Iranian propaganda.

Why suspicious?

How did this suspicion arise? If you look at the screenshots below, you will notice that numerous accounts are all tweeting the exact same text at similar times, with no indication that any of them is a retweet. These all appeared after I searched for ‘Isa Qasim’ on Twitter.

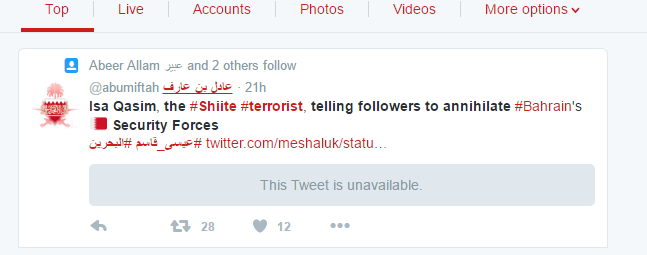

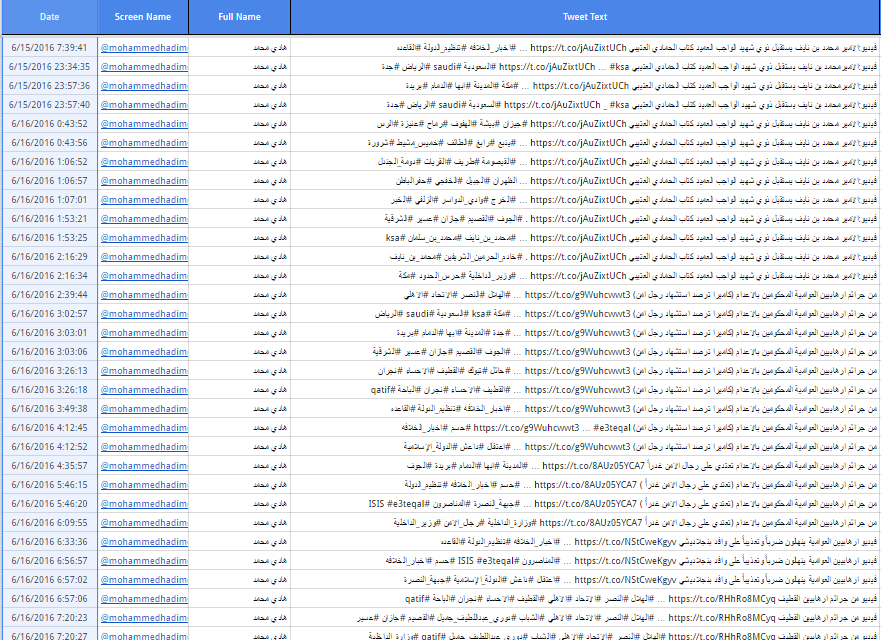

My curiosity piqued, I pulled some data from Twitter. Specifically, I requested from the Twitter API Tweets that included the phrase ‘Isa Qasim’ (I did other searches in Arabic and with different English spellings of Qasim, but let’s stick with this particular one). I got 628 tweets back from the past few days. Of these, 219 were the exact same Tweet, with the text ‘Isa Qasim, the #Shiite #terrorist, telling followers to annihilate #Bahrain’s Security Forces’. I then copied these 219 tweets and put them in a separate spreadsheet for easier analysis. See below for a screenshot.
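For anyone who wants to repeat the duplicate count on their own data, here is a minimal sketch in Python. It assumes the tweets have already been exported into a list of dictionaries with a `text` field. The field names, the function name `count_duplicate_texts`, and the sample rows are illustrative assumptions, not the actual export or the tool used for this article.

```python
from collections import Counter


def count_duplicate_texts(tweets):
    """Return (text, count) pairs for texts posted more than once, most frequent first."""
    # Strip surrounding whitespace so trivially different copies are counted together.
    counts = Counter(t["text"].strip() for t in tweets)
    return [(text, n) for text, n in counts.most_common() if n > 1]


# Hypothetical sample rows, standing in for an export of search results.
sample = [
    {"user": "acct_a", "text": "Same message"},
    {"user": "acct_b", "text": "Same message"},
    {"user": "acct_c", "text": "Same message "},
    {"user": "acct_d", "text": "Something else"},
]

duplicates = count_duplicate_texts(sample)
print(duplicates)
```

A text that dominates the list, posted by many different accounts and not marked as a retweet, is the kind of pattern described above.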

Evidence to suggest these are fake accounts, or automated

There are a number of things that suggest quite strongly that the tweets are automated and the accounts managed by either a machine or a small number of people.

- Every one of those Tweets, with the exception of the first (the original Tweet, which was presumably copied), was launched from TweetDeck, a program favoured by marketeers that allows one to manage multiple accounts from a single machine. In this case, this was the original:

- All the accounts have a similar, low number of followers and people they follow.

- All of the accounts were created in either February, March, June, or July of 2014, with the exception of a few, which were all created 18th or 19th February 2016.

- The accounts created in 2014 were also created across a span of two or three consecutive days within the same month. For example, of the accounts created in February 2014, 7 were created on the 4th and 10 on the 5th. Of those created in March, 10 were created on the 2nd, 12 on the 3rd, 6 on the 4th, 14 on the 5th, and so on. You get the picture…

- Accounts created on the same day tend to have a similar number of tweets. For example, those accounts created on 2nd February 2014 all have about 400 Tweets to their name, all those created in March 2014 have about 3,500, and all those created in June 2014 have about 7,000. Obviously these figures will change, yet the correlation between the date of account creation and the number of tweets is interesting, especially given that the older accounts actually have fewer Tweets.

A snapshot of the spreadsheet

- The apparently anomalous accounts, created in 2016, are actually anything but. The interesting thing about those accounts is that, unlike the other accounts, which have no biographical information or banner photo, the ones created in 2016 do have a bio and a header image. What’s more, of the 43 accounts created in 2016, 14 were created on 18th February, while the remainder were created on 19th February. Thus not only were they created on consecutive days like the other accounts, they also shared the same characteristics of header image and biographical information.

- The biographical information that does occur is fairly generic. A crudish corpus-based aggregation, after removing a lot of commonly occurring prepositions, shows that the most common word is ‘Allah’, alongside various other religious idioms or pleasantries. See the below word cloud.

- When you copy one of the Arabic Tweets and paste it into Twitter’s search facility, you get the Top Tweet, which in this case is usually from the account that first Tweeted it. What almost always happens is that the first account to Tweet the information was also one of the suspicious accounts. In particular, a lot of the recent tweets seem to originate with those suspicious accounts that were set up in 2016.
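The creation-date and tweet-count patterns in the list above can be checked with a short grouping script. The following is a rough sketch, assuming each account is a dictionary with a `created` date and a `tweet_count`. These field names, the function `summarise_by_creation_date`, and the sample figures are invented for illustration and are not taken from the spreadsheet.

```python
from collections import defaultdict
from datetime import date
from statistics import median


def summarise_by_creation_date(accounts):
    """Group accounts by creation date; report group size and median tweet count."""
    groups = defaultdict(list)
    for account in accounts:
        groups[account["created"]].append(account["tweet_count"])
    return {
        day: {"accounts": len(counts), "median_tweets": median(counts)}
        for day, counts in sorted(groups.items())
    }


# Invented sample data, loosely shaped like the patterns described above.
accounts = [
    {"name": "a", "created": date(2014, 3, 2), "tweet_count": 3500},
    {"name": "b", "created": date(2014, 3, 2), "tweet_count": 3480},
    {"name": "c", "created": date(2014, 3, 2), "tweet_count": 3520},
    {"name": "d", "created": date(2016, 2, 18), "tweet_count": 900},
    {"name": "e", "created": date(2016, 2, 18), "tweet_count": 950},
]

summary = summarise_by_creation_date(accounts)
for day, stats in summary.items():
    print(day, stats)
```

Many accounts sharing a handful of consecutive creation dates, each group with near-identical tweet counts, would be hard to explain as ordinary independent users.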

While this might just be a phenomenon within a cycle relating to recent tweets, it is more likely that the new accounts, which look more credible (i.e. have banner picture and biographical information) are control accounts, that mimic more credible sources, and are then copied by the rest of the bots. The only exception I have found so far is that the very few Tweets in English were copied from accounts that look legitimate. In addition to the English tweet examined above, another recent one included the text ‘The decision is a legal matter based on article 10c of #Bahrain’s Nationality #law , codified within Bahrain’s constitution’. This was written by Fawaz Al Khalifa, Bahrain’s Minister of Information, and presumably copied. The majority of the Tweets are in Arabic.
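One way to test the control-account idea on exported data is to find, for each duplicated text, which account posted it first, and then count how often each account leads. This is only a sketch under assumptions: the `user`, `text`, and `time` fields, the function names, and the sample rows are all hypothetical, and the earliest tweet in a one-week sample is not necessarily the true original.

```python
from collections import Counter
from datetime import datetime


def first_posters(tweets):
    """Map each tweet text to the (user, time) of its earliest occurrence in the sample."""
    earliest = {}
    for t in tweets:
        key = t["text"].strip()
        if key not in earliest or t["time"] < earliest[key][1]:
            earliest[key] = (t["user"], t["time"])
    return earliest


def lead_counts(tweets):
    """Count how many distinct texts each user was the first to post."""
    return Counter(user for user, _ in first_posters(tweets).values())


# Hypothetical sample: new_acct posts first each time, the others follow.
sample = [
    {"user": "old_acct_1", "text": "msg one", "time": datetime(2016, 6, 21, 10, 5)},
    {"user": "new_acct", "text": "msg one", "time": datetime(2016, 6, 21, 10, 0)},
    {"user": "old_acct_2", "text": "msg two", "time": datetime(2016, 6, 21, 11, 30)},
    {"user": "new_acct", "text": "msg two", "time": datetime(2016, 6, 21, 11, 0)},
    {"user": "old_acct_1", "text": "msg two", "time": datetime(2016, 6, 21, 11, 45)},
]

leaders = lead_counts(sample)
print(leaders.most_common())
```

If a small set of accounts leads on most texts while the rest only ever follow, that is consistent with the control-account reading, though it does not prove it.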

They all Tweet the same, but what do they Tweet?

Twitter limits API requests to Tweets that have occurred in the past week or so. However, we can still go to the individual accounts and look at their content for an insight into their most recent concerns, which will logically reflect the ideology of whoever is behind them. Since all these accounts appear to copy from a Master Tweet, they are all essentially Tweeting the exact same Tweets, albeit at different rates, times, and frequencies (presumably to give an air of human agency). If you take two random accounts from the above list, extract their tweets from the past week, and arrange them in alphabetical or date order, you will see they essentially tweet the same things. This homogeneity lends further credence to the idea that they are bots with a specific, collective agenda.
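The side-by-side comparison of two accounts can also be reduced to a single number. The sketch below uses Jaccard similarity (shared texts divided by all distinct texts across both accounts), which is my own choice of measure for illustration rather than something used in the analysis above. The function name and sample data are hypothetical.

```python
def jaccard_overlap(tweets_a, tweets_b):
    """Share of distinct tweet texts that two accounts have in common (0.0 to 1.0)."""
    set_a = {t.strip() for t in tweets_a}
    set_b = {t.strip() for t in tweets_b}
    if not set_a and not set_b:
        return 0.0  # Two empty timelines: treat as no measurable overlap.
    return len(set_a & set_b) / len(set_a | set_b)


# Hypothetical week of tweet texts from two accounts.
account_a = ["text x", "text y", "text z"]
account_b = ["text y", "text z", "text w"]

overlap = jaccard_overlap(account_a, account_b)
print(overlap)
```

Two unrelated users would normally score close to zero on exact text matches, so values approaching 1.0 across many random pairs would support the impression of a shared source.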

The discourse?

Although the big data only goes back a week, we can see most of the Tweets bunch around the same themes. They condemn ‘terrorist’ acts in Saudi’s mostly Shia Eastern Province, and acts by the ‘Shia’ opposition in Bahrain. I use the term Shia here because it is mentioned frequently, as are derogatory terms such as Rawafid (a term used to mean the Shia are ‘rejectionists’ of the true Islamic faith). This Tweet, for example, ‘رد قوي من شاعر سعودي على روافض’, translates as ‘strong response from Saudi poet against the Rawafid’ (a video of the poem in question was attached). Oftentimes, Iran is invoked, or the concept of fitna (discord). I won’t go into too much detail about the exact content of the tweets, but anyone is welcome to the data.

The relevant thing is that hundreds of what seem to be automated Twitter accounts are repeating propaganda that conflates acts of violence, terrorism, and unrest with both Arab Shia and Iran. This strongly suggests that institutions, people, or agencies with significant resources are deliberately creating divisive, anti-Shia sectarian propaganda and disseminating it in a robotic but voluminous fashion. The problems here are numerous: such accounts can not only contribute to sectarianism (although it is hard to infer causal relations from this), but also create the impression that policies such as the denationalisation of Isa Qasim have widespread popular support.

It is also interesting to note that among the many hashtags used by these accounts is Da’ish, the derogatory term used to describe the Islamic State. It could be that they wish to tap into that wider audience, in the hope that the message gets out – entirely possible given the generous hashtagging the accounts engage in. While the notion of bot accounts is probably not news to anyone, the evidence here hopefully highlights that much online sectarian discourse is perhaps inflated by those groups or individuals with specific ideological agendas, and the means to do so. Of course we know PR and reputation management companies offer such services, yet their work is often done secretively and behind closed doors. It would be interesting to find out who is behind this.

It would also suggest that Twitter need to better regulate spam. On the plus side, if an account looks suspicious, you have an idea of what to look for in terms of creation date, Tweet numbers etc. Happy blocking 🙂
